The brief history of artificial intelligence: The world has changed fast – what might be next? – Our World in Data

These rapid advances in AI capabilities have made it possible to use machines in a wide range of new domains:

When you book a flight, it is often an artificial intelligence, and no longer a human, that decides what you pay. When you get to the airport, it is an AI system that monitors what you do there. And once you are on the plane, an AI system assists the pilot in flying you to your destination.

Several governments are purchasing autonomous weapons systems for warfare, and some are using AI systems for surveillance and oppression.

AI systems help to program the software you use and translate the texts you read. Virtual assistants, operated by speech recognition, have entered many households over the last decade. Now self-driving cars are becoming a reality.

In the last few years, AI systems have helped to make progress on some of the hardest problems in science.

Large AIs called recommender systems determine what you see on social media, which products are shown to you in online shops, and what gets recommended to you on YouTube. Increasingly they are not just recommending the media we consume, but based on their capacity to generate images and texts, they are also creating the media we consume.

Artificial intelligence is no longer a technology of the future; AI is here, and much of what is reality now would have looked like sci-fi just recently. It is a technology that already impacts all of us, and the list above includes just a few of its many applications.

The wide range of listed applications makes clear that this is a very general technology that can be used by people for some extremely good goals – and some extraordinarily bad ones, too. For such ‘dual use technologies’, it is important that all of us develop an understanding of what is happening and how we want the technology to be used.

Just two decades ago the world was very different. What might AI technology be capable of in the future?

The AI systems that we just considered are the result of decades of steady advances in AI technology.

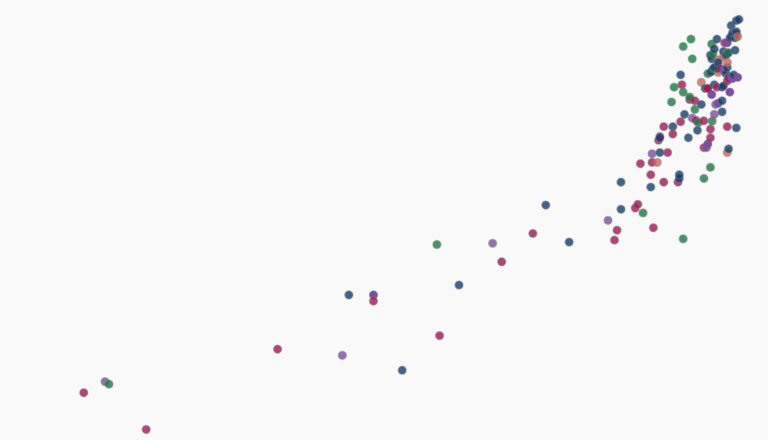

The big chart below brings this history over the last eight decades into perspective. It is based on the dataset produced by Jaime Sevilla and colleagues.7

Each small circle in this chart represents one AI system. The circle’s position on the horizontal axis indicates when the AI system was built, and its position on the vertical axis shows the amount of computation that was used to train the particular AI system.

Training computation is measured in floating point operations, or FLOP for short. One FLOP is equivalent to one addition, subtraction, multiplication, or division of two decimal numbers.

All AI systems that rely on machine learning need to be trained, and in these systems training computation is one of the three fundamental factors that are driving the capabilities of the system. The other two factors are the algorithms and the input data used for the training. The visualization shows that as training computation has increased, AI systems have become more and more powerful.

The timeline goes back to the 1940s, the very beginning of electronic computers. The first AI system shown is ‘Theseus’, Claude Shannon’s robotic mouse from 1950 that I mentioned at the beginning. Towards the other end of the timeline you find AI systems like DALL-E and PaLM, whose abilities to produce photorealistic images and interpret and generate language we have just seen. They are among the AI systems that used the largest amount of training computation to date.

The training computation is plotted on a logarithmic scale, so that each grid line marks a 100-fold increase over the previous one. This long-run perspective shows a continuous increase. For the first six decades, training computation increased in line with Moore’s Law, doubling roughly every 20 months. Since about 2010 this exponential growth has sped up further, to a doubling time of just about 6 months. That is an astonishingly fast rate of growth.8
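
To get a feel for how different these two doubling times are, the short sketch below converts a doubling time into a growth factor over a fixed period. It is only an illustration of the arithmetic, assuming perfectly steady exponential growth; the function names and the ten-year window are choices made for this example and do not come from the dataset.

```python
import math


def growth_factor(months: float, doubling_time_months: float) -> float:
    """Factor by which a quantity grows over `months`
    if it doubles every `doubling_time_months` (idealized, steady growth)."""
    return 2.0 ** (months / doubling_time_months)


def grid_lines_spanned(factor: float, fold_per_grid_line: float = 100.0) -> float:
    """How many 100-fold grid lines a given growth factor covers on a log scale."""
    return math.log(factor) / math.log(fold_per_grid_line)


decade = 120  # months in ten years (illustrative window)

moore_era = growth_factor(decade, 20)   # 64.0
recent_era = growth_factor(decade, 6)   # 1048576.0

print(f"20-month doubling over 10 years: {moore_era:,.0f}x")
print(f"6-month doubling over 10 years:  {recent_era:,.0f}x")
print(f"Grid lines spanned: {grid_lines_spanned(moore_era):.1f} vs "
      f"{grid_lines_spanned(recent_era):.1f}")
```

Under these assumptions, a decade of doubling every 20 months gives a 64-fold increase, which is less than one grid line on the chart, while a decade of doubling every 6 months gives an increase of about a million-fold, or roughly three grid lines.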

These fast doubling times have added up to large increases. PaLM’s training computation was 2.5 billion petaFLOP, more than 5 million times larger than that of AlexNet, the AI system with the largest training computation just 10 years earlier.9
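
As a rough consistency check, the sketch below works backwards from the figures just quoted: if training computation grew 5-million-fold in 10 years, what doubling time does that imply? It assumes steady exponential growth between the two systems, which is a simplification, and the function name is made up for this example.

```python
import math


def implied_doubling_time(growth: float, months: float) -> float:
    """Doubling time (in months) implied by a total growth factor
    over a period, assuming steady exponential growth."""
    return months / math.log2(growth)


# Figures quoted in the text: more than 5 million-fold growth in about 10 years.
growth = 5e6
period_months = 10 * 12

doubling = implied_doubling_time(growth, period_months)
print(f"Implied doubling time: {doubling:.1f} months")  # roughly 5.4 months
```

Because the text says "more than 5 million times", the result of roughly 5.4 months is best read as an upper bound on the implied doubling time. It is in line with the doubling time of about 6 months reported for the period since 2010.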

Scale-up was already exponential and has sped up substantially over the past decade. What can we learn from this historical development for the future of AI?

This article draws on data and research discussed in our entry on Artificial Intelligence.